It can be useful to think of quality as ‘fitness for use’, and to measure this with quality criteria for bug reports, code and user stories. But I’m going to take a different angle in this post, and look at how a wider picture of quality influences not just the execution, but also the direction of work with regard to the ieDigital Banking Platform.

I’ve been in the business long enough to remember corporate cultures that suffered from ‘silo syndrome’. I still cringe at the memory of one particular company Christmas party, where R&D sat at one table, consultancy at another, and everyone else at a third. You can imagine – if you have a suitably twisted imagination, or have suffered suitably traumatic experiences – how much sand this threw into our day-to-day work. At one level, quality is certainly about ‘fitness for use’ and the applicable criteria. At another level, it is also about the corporate value chain: the streams of product, vision and communication that enable us to deliver something to our clients, which in turn makes it worth them paying our salaries. And it’s about how effectively our work and arrangements fit into this value chain.

One striking example of something that gives no direct benefit to our clients is time spent configuring machines, whether by developers, QA or operations. In fact, this is a classic source of friction, especially as different departments evolve different configurations to suit their disparate needs, making it ever more difficult to pass software up the line. So, when I was given the task of automating tests for Interact’s Go services and consumers, we agreed on a requirement that the automation should need zero (or near-zero) configuration.

Let’s see how our Go test automation project contributes to quality in both the ‘fitness for use’ and the ‘value chain’ senses.

Version control

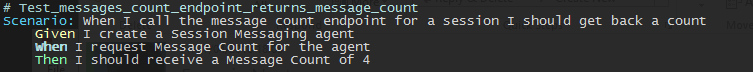

Firstly, Go test automation contributes to quality in the ‘fitness for use’ sense by allowing comprehensible test definitions like:

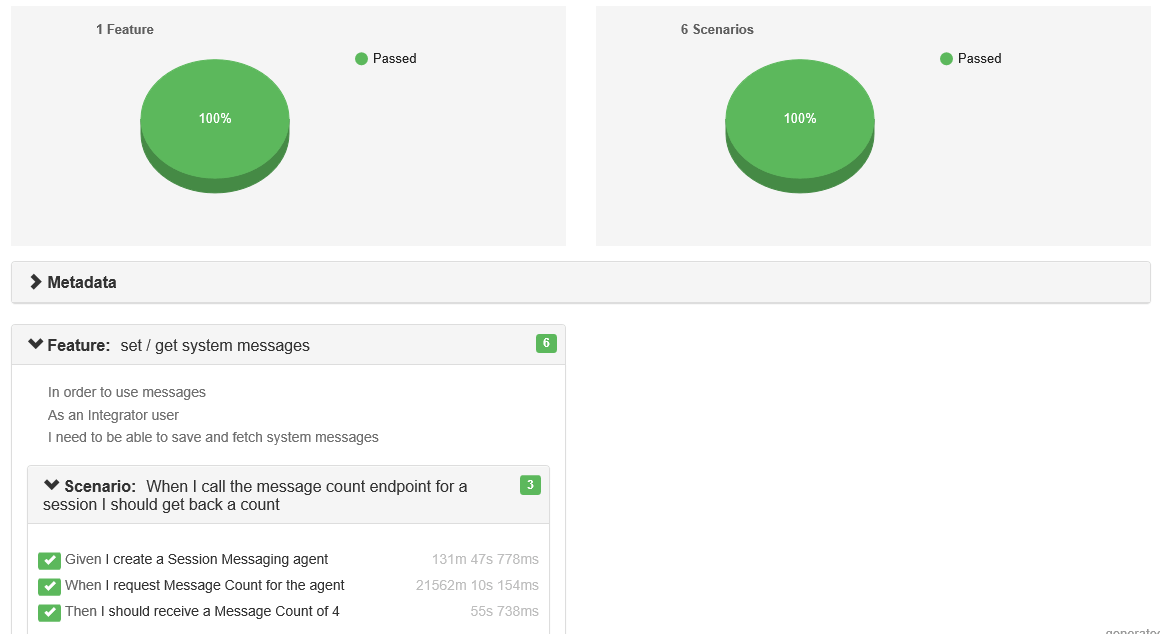

It also runs the tests automatically in TeamCity and reports the results graphically.

Secondly, it contributes to quality in the ‘value chain’ sense by exploiting Docker to minimize server configuration requirements, and by using a Makefile. Any task that has to be executed both by developers and by our CI tool, TeamCity, is automated in just one way, in just one place: the Makefile. Such tasks include building Docker images from the system under test, importing Docker images of other needed Interact components, and then running them all together as a local test and development environment.

What’s nice about this, compared to the traditional approach of hard-coding these steps in TeamCity, is that all this code is now in version control alongside the components themselves.

So while the test side of this task addresses (as it must) quality as ‘fitness for use’, I hope that the work we’ve put into making this build/test/deploy automation portable to all stages of the development/QA/Ops cycle will improve quality in the ‘value chain’ sense, too.